VM FAQs

Frequently asked questions about VMs and VM configurations in Orka by MacStadium.

Orka 2.4.x content

This page has not been updated to reflect the changes introduced in Orka 3.0. Some of the information might be outdated or incorrect. Use 2.4.x to 3.0.0: API Mapping and 2.4.x to 3.0.0: CLI Mapping to figure out the correct endpoints and commands.

macOS support

Does Orka support Big Sur?

Yes. Starting with Orka v1.4.2, you can spin up Orka VMs with macOS Big Sur.

Stay tuned

Currently, MacStadium does not provide a macOS Big Sur image or ISO. You can create or supply your own image, or upload an ISO yourself. See here.

MacStadium will provide resources for spinning up macOS Big Sur VMs in the future.

Sharing

Are VMs and VM configurations shared across users in the same Orka environment?

When working with the Orka CLI or the RESTful API, users work in isolation even if they share the same Orka environment. As a result, in the CLI and the RESTful API, users have access only to the VM configurations and VMs they created themselves.

Outside of the Orka CLI and the RESTful API, users can still utilize the VMs created by others in their shared Orka environment. Proper networking and VM configuration must be in place.

In future versions of the Orka CLI and RESTful API, users with a sufficient role and permissions might be able to see and work with VMs created by others in their environment.

Networking

What ports are exposed on my VMs in Orka?

By default, every VM deployed in Orka comes with 3 exposed ports: SSH, VNC, and the Screen Sharing port.

How do I use the ports of my VMs?

You can forward traffic to a specified port on your VMs (specify the --ports flag during deployment). You need to map a free port from the node that hosts the VM to a free port on the VM. The port on the node will listen for requests and then forward them to the respective port on the respective VM. See Forward Traffic to a Specific VM Port.

If you want to forward traffic to specific ports on an already deployed VM, you need to use Kubernetes Services to map the node's IPs and ports to the ports on your macOS VM. See Kubernetes Documentation: Defining a Service.
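As a rough illustration of the Kubernetes Service approach, the sketch below builds a NodePort Service manifest as a Python dictionary and prints it as JSON. The field names follow the standard Kubernetes Service schema. The Service name, the selector label, and the port numbers are hypothetical placeholders: this page does not state which labels Orka puts on VM pods, so check your own environment before using anything like this.

```python
import json


def node_port_service(name, selector, node_port, vm_port):
    """Build a NodePort Service manifest that maps node_port on the node to vm_port on the VM pod.

    `selector` must match the labels on the pod that wraps your VM.
    The label used in the example below is a made-up placeholder.
    """
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "NodePort",
            "selector": selector,
            "ports": [
                {
                    "protocol": "TCP",
                    "port": vm_port,        # port exposed by the Service inside the cluster
                    "targetPort": vm_port,  # port the VM listens on
                    "nodePort": node_port,  # port opened on the node (default range 30000-32767)
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical values: replace the name, label, and ports with your own.
    manifest = node_port_service("vm-web", {"app": "my-orka-vm"}, 30080, 3000)
    print(json.dumps(manifest, indent=2))
```

The printed JSON has the same structure as the YAML examples in the Kubernetes documentation referenced above.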

How do I connect to my VM via VNC?

You can get the IP and VNC port for your VM by running orka vm list or orka vm status. Enter them in a VNC client of your choice (for example, RealVNC or VNC Viewer).

See VNC to a VM.

How do I connect to my VM via SSH?

On the VM, enable remote login.

Next, configure your SSH connection to access the VM on the proper port. VMs in Orka run on their own private subnet and are NAT'd from the host IP by incrementing ports for each new VM. The starting port is 8822.
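For illustration only, here is a small sketch that assembles the ssh command for a VM from the node IP and the SSH port reported for that VM. The -p option is the standard OpenSSH flag for a non-default port. The IP address and the admin user name in the example are placeholders, not values confirmed by this page.

```python
import shlex


def ssh_command(node_ip, ssh_port=8822, user="admin"):
    """Return the ssh argument list for a VM reachable on node_ip:ssh_port.

    8822 is the starting SSH port mentioned above; later VMs get higher ports,
    so pass the port reported for your VM. `user` is a placeholder account name.
    """
    if not 1 <= ssh_port <= 65535:
        raise ValueError(f"invalid port: {ssh_port}")
    return ["ssh", "-p", str(ssh_port), f"{user}@{node_ip}"]


if __name__ == "__main__":
    # Hypothetical node IP and port, for display only.
    print(shlex.join(ssh_command("10.221.188.11", 8823)))
```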

How should I approach firewall whitelisting to ensure traffic to and from my VMs?

We recommend whitelisting a port range that covers twice the number of VMs you expect to be running. For example, if you plan to use 24 VMs, routinely destroying and bringing them up, you need to whitelist a 48-port range for each port type (5900-5948, 5999-6047, 8822-8870).
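The arithmetic behind the example can be sketched as follows. The three starting ports are taken from the ranges above, and the end of each range is computed as the starting port plus twice the VM count, which reproduces the example figures. The function name is illustrative and not part of Orka.

```python
def whitelist_ranges(expected_vms, start_ports=(5900, 5999, 8822)):
    """Return (first_port, last_port) pairs to whitelist, one per port type.

    Each range spans twice the expected VM count, counted from the starting
    port of that port type, following the rule of thumb above.
    """
    if expected_vms < 1:
        raise ValueError("expected_vms must be at least 1")
    span = 2 * expected_vms
    return [(start, start + span) for start in start_ports]


if __name__ == "__main__":
    for first, last in whitelist_ranges(24):
        print(f"{first}-{last}")
```

For 24 VMs this yields the same three ranges as the example: 5900-5948, 5999-6047, and 8822-8870.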

How do I disable VNC on my VM?

By default, Orka deploys your VMs with VNC enabled. You can disable the VNC console when creating a VM configuration or during deployment with the --no-vnc-console flag. You cannot re-enable VNC on that VM afterwards. If you need VNC again, re-deploy the VM configuration with VNC enabled (--vnc-console yes).

Do Orka VMs have normal network access?

The networking and connectivity setup of Orka environments (and the respective VMs) is the standard setup provided by MacStadium.

Every environment sits behind a Cisco ASAv firewall. The environment and the resources in it are accessible via VPN. You can use the Cisco AnyConnect VPN client (provided with the firewall), another VPN client of your choice, or you can configure VPN connectivity between Orka and another cloud provider.

Can I specify a private IP for the VM when I spin it up?

You cannot assign a private IP during deployment - Orka does that for you. After the deployment completes, you can configure your VM networking and you can adjust your pod security policies.

Deployment

Can I deploy a macOS VM with a .yml?

No. You need to use the Orka CLI, the Orka API, or the Orka web UI.

Orka is a customized namespace where you can't control deployments with kubectl and *.yml files. For your deployments, Orka creates a specialized pod descriptor and deploys it.

Container development

How do I test my containers in Orka? For example, can I download them locally and test them?

All container (VM configuration and VM) development and testing happen in Orka. You can create as many VM configurations and VMs as you need under as many users as you need. For versioning, you can use the save and commit operations.

You cannot download, develop and test a VM configuration or VM locally or outside of Orka.

Is Orka a private fork of Docker? Are Orka VMs true Docker containers?

No. Orka is not a private fork of Docker and Orka macOS VMs are not true Docker containers. Each Orka VM is something between a Docker image and a full-weight VM.

The Docker container is a shell that wraps around the macOS VM so that it can be orchestrated with Kubernetes.

Furthermore, the Orka macOS images are not exactly Docker images. They are full macOS. The lightweight split comes from Orka's take on storage and differentials. When you deploy a VM, Orka pulls a differential of the base image into a Docker container that looks, acts, and feels like a lightweight Docker container. In reality, it's similar to a linked clone VM or an instant clone VM that has been containerized and then orchestrated with Kubernetes.

Nested virtualization

Can I run other virtualization on my macOS Orka VMs? (For example, Docker containers)

Starting with Orka 1.1.0, Orka VMs support nested virtualization on Intel-based nodes. This feature is available upon request.

This feature is not available on Apple Silicon-based nodes.

This is a BETA feature.

Troubleshooting

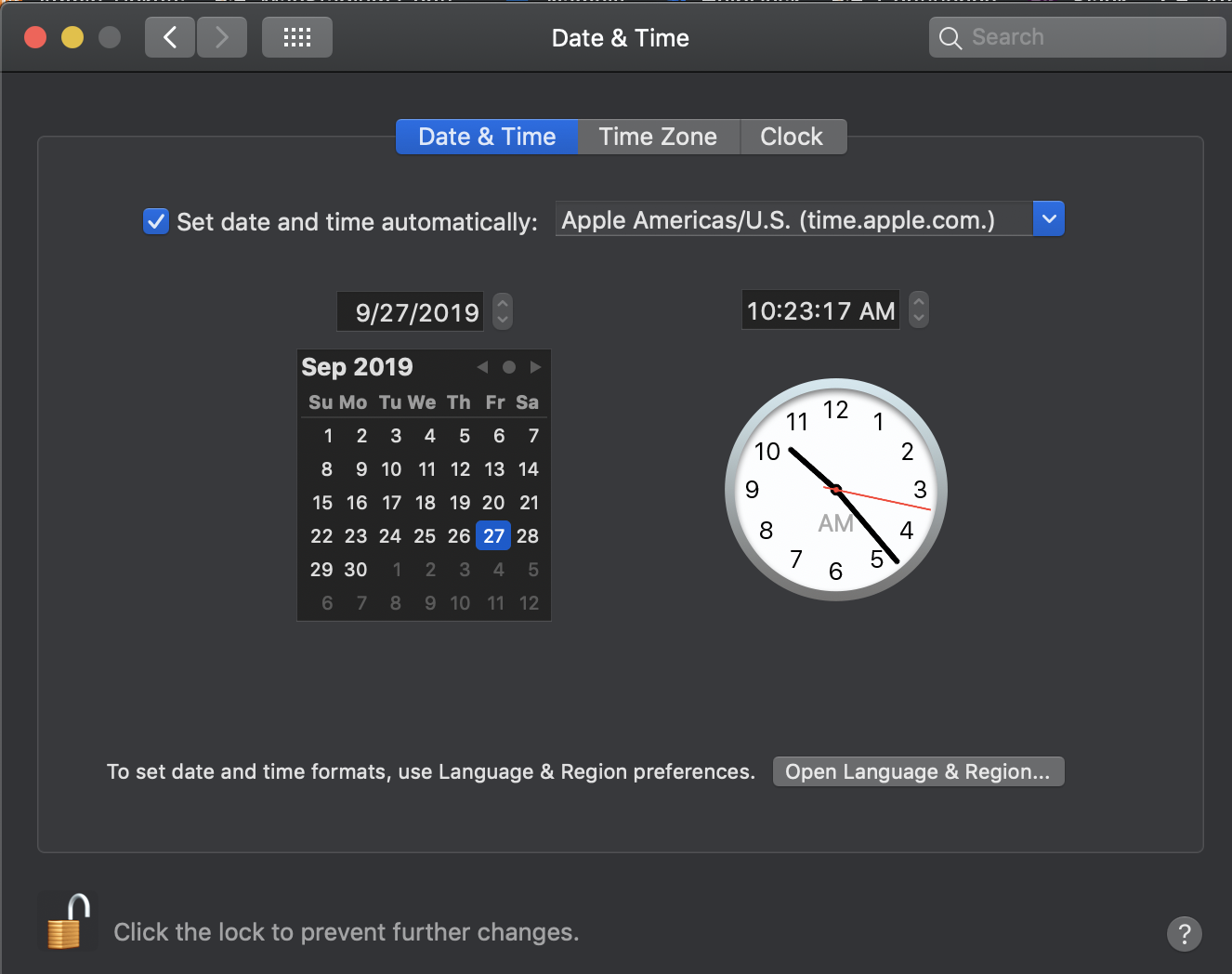

My VM clock is now off, why is that?

macOS VMs are more prone than a traditional macOS installation to experience clock drift. There are two methods for fixing this:

- From the command line:

Connect to your VM via VNC and run the following command in the Terminal:

sudo sntp -sS time.apple.com

- From the UI:

Connect to your VM via VNC and navigate to System Preferences > Date & Time. Make sure that the Set date and time automatically checkbox and the time.apple.com source are selected. Save your changes to force a sync.

Why is my VM in a Stopped state?

In Kubernetes, when a VM fails to boot, it becomes stopped immediately. Your VM might have failed to boot in the following cases:

- The base image used by the VM is corrupted. A base image might become corrupted after an unsuccessful save or commit operation.

  In this case, re-create your original VM configuration using another image and deploy it.

- The VM has an ISO attached and the ISO is already in use. Orka applies a read-only lock to ISOs in use. After using an ISO to set up a VM, the new VM needs to be saved with orka image save, then rebooted with the new image name. This removes the read-only lock on the ISO.

  Make sure you delete any additional VMs trying to pull from this ISO before you apply the save. orka image save applies orka vm stop before saving, which removes the read-only lock, and Kubernetes immediately lets a different VM use the ISO. This interferes with the save operation in progress and corrupts the VM you are trying to save.

- The VM was created with an empty base image and no ISO attached.

  In this case, re-deploy the VM but make sure to attach an ISO during the deployment. For more information, see Working with ISOs for the Orka CLI or Deploy VM configuration for the Orka API.

My VM is in an ERROR state, what should I do?

The ERROR state indicates a critical issue with your VM instance. You will not be able to restore your VM. To free its resources, you need to delete it by its ID because deleting by name might not always work.

If you're working from the Orka CLI or the Orka API:

- Obtain the VM ID. (orka vm list or GET /resources/vm/list)

- Delete by ID. (orka vm delete -v <VM_ID> -y or pass the VM ID in the body of DELETE /resources/vm/delete)
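As a hedged sketch of the API route, the snippet below only builds the delete request and does not send it. The endpoint path comes from the step above. The base URL, the Bearer token header, and the orka_vm_name body field are assumptions about the 2.4.x API that you should verify against the API reference for your Orka version before use.

```python
import json
import urllib.request


def build_delete_vm_request(api_base_url, token, vm_id):
    """Build (but do not send) a DELETE request that removes a VM by its ID.

    Assumptions, not confirmed by this page: the body field is named
    "orka_vm_name" and authentication uses a Bearer token header.
    """
    body = json.dumps({"orka_vm_name": vm_id}).encode("utf-8")
    return urllib.request.Request(
        url=f"{api_base_url.rstrip('/')}/resources/vm/delete",
        data=body,
        method="DELETE",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
    )


if __name__ == "__main__":
    # Hypothetical endpoint, token, and VM ID, for display only.
    req = build_delete_vm_request("http://orka-api.example", "my-token", "abc123")
    print(req.get_method(), req.full_url)
```

Passing the returned object to urllib.request.urlopen would send the request. That call is left out here so that the sketch has no side effects.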

If you're working from the Orka Web UI:

- Go to the VMs page, select the instance in an ERROR state, and click More > Delete.
